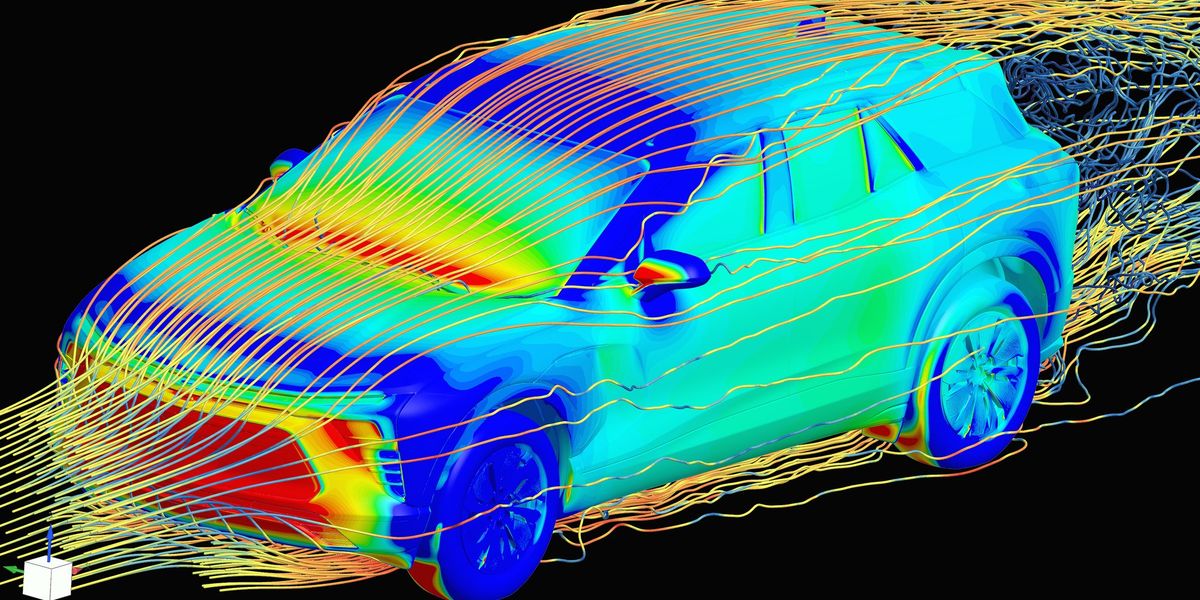

…Previously, a creative design engineer would develop a 3D model of a new car concept. This model would be sent to aerodynamics specialists, who would run physics simulations to determine the coefficient of drag of the proposed car—an important metric for energy efficiency of the vehicle. This simulation phase would take about two weeks, and the aerodynamics engineer would then report the drag coefficient back to the creative designer, possibly with suggested modifications.

Now, GM has trained an in-house large physics model on those simulation results. The AI takes in a 3D car model and outputs a coefficient of drag in a matter of minutes. “We have experts in the aerodynamics and the creative studio now who can sit together and iterate instantly to make decisions [about] our future products,” says Rene Strauss, director of virtual integration engineering at GM…

“What we’re seeing is that actually, these tools are empowering the engineers to be much more efficient,” Tschammer says. “Before, these engineers would spend a lot of time on low added value tasks, whereas now these manual tasks from the past can be automated using these AI models, and the engineers can focus on taking the design decisions at the end of the day. We still need engineers more than ever.”

Then I doubt they’re running the most accurate, two-week-long physics solvers mentioned at this stage either. You only do that when you need the accuracy. A quick simulation doesn’t take long.

I’m failing to see why the creative writing machine is better than a simulation set to ‘rough’.

The problem is that you saw AI and thought LLM.

Machine learning is a big field. AI/neural networks are a subset of that field, and LLMs are only a single application of a specific type of neural network (the Transformer model) to a specific task (next-token prediction).

The only reason that LLMs and image generation models are the most visible is that training neural networks requires a large amount of data, and the largest repository of public data, the Internet, is primarily text and images. So text and image models were the first large models to be trained.

The most exciting and potentially impactful uses of AI are not LLMs. Things like protein folding and robotics will have more of an impact on the world than chatbots.

In this case, generating fast approximations for physical modeling can save a ton of compute time for engineering work.
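To make the "fast approximation" idea concrete, here is a toy sketch of a surrogate model: run the expensive solver a limited number of times up front, fit a cheap model to those results, then query the cheap model for new designs. Everything here is an assumption for illustration. `slow_simulation` is a made-up stand-in for a real solver, the two "shape parameters" are hypothetical, and a quadratic least-squares fit replaces whatever neural network GM actually uses, which the article does not describe.

```python
import numpy as np

def slow_simulation(params):
    """Hypothetical stand-in for an expensive solver.

    Maps two made-up shape parameters to a drag-like number.
    """
    x, y = params[..., 0], params[..., 1]
    return 0.30 + 0.05 * x**2 - 0.02 * x * y + 0.01 * y

def features(params):
    """Quadratic feature map: 1, x, y, x^2, xy, y^2."""
    x, y = params[..., 0], params[..., 1]
    return np.stack([np.ones_like(x), x, y, x * x, x * y, y * y], axis=-1)

def fit_surrogate(train_params, train_outputs):
    """Least-squares fit of the quadratic surrogate to solver outputs."""
    coeffs, *_ = np.linalg.lstsq(features(train_params), train_outputs, rcond=None)
    return coeffs

def predict(coeffs, params):
    """Cheap evaluation: one small matrix-vector product per batch."""
    return features(params) @ coeffs

rng = np.random.default_rng(0)

# The expensive part, paid once: sample designs and run the solver on them.
train_params = rng.uniform(-1.0, 1.0, size=(200, 2))
train_outputs = slow_simulation(train_params)
coeffs = fit_surrogate(train_params, train_outputs)

# The cheap part, repeated as often as needed: score new designs.
new_designs = rng.uniform(-1.0, 1.0, size=(5, 2))
max_error = np.max(np.abs(predict(coeffs, new_designs) - slow_simulation(new_designs)))
print(f"max surrogate error on new designs: {max_error:.2e}")
```

The error here is tiny only because the toy solver is itself a quadratic, so the surrogate can represent it exactly. With a real solver and real geometry the surrogate is an approximation, and how far it can be trusted depends on how well the training runs cover the designs being scored.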

Watson beat Ken Jennings over a decade ago. Protein folding was already done too, the people who did it even won a Nobel prize for it a couple years ago.

LLMs aren’t the most visible part of AI, after over 75 years of AI, because they’re the biggest or latest or greatest or whatever. It’s marketing. Plain and simple marketing.

Protein folding is far from “done” lol. Models have gotten a lot better but there is still more to do.

I didn’t say it was finished. I said people had won a Nobel prize for having done it. It takes decades to win a Nobel prize. My point was that it had been done years and years ago, not recently.

I came here to say the same thing. There’s no way a coarse CFD simulation of air over a car takes two weeks to run on even budget HPCs.

I don’t know if this is the full explanation, but the article does touch on how the LPM can be tweaked to match physical tests: